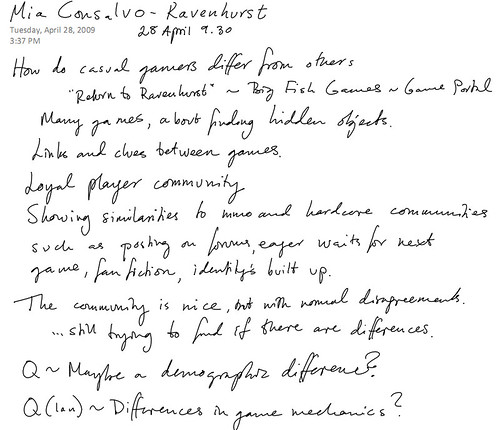

In the demo session we got the chance to look closer at the applications presented at the conference.

The demo session reminds me of the ACE conference, but here at FDG it was more woven into the program: it was scheduled late, so that one could go and look closer at the applications that had sparked an interest during the paper presentations. All conferences in this field should do it like this! As you can see in the picture below, the room was packed.

Here is Ken Hullett from the EIS lab, showing his scenario generation app for emergency rescue training games.

Ken is using a hierarchical task network planner that (I asked) can be used for other systems. (Note to self: don’t forget.)

In the corner next to the Kodu demo I saw people wearing space-like goggles and holding a piece of cardboard with a pattern on it. They looked totally immersed as they carefully tilted it at different angles. I asked to try, and lo and behold (as Yasmin Kafai would say), when I was wearing the goggles and looking at the patterned piece of cardboard, things appeared on it! By tilting the cardboard I could steer a ball that rolled in different directions through a maze.

I remember looking at other applications for augmented games. A group at Fraunhofer made the cross-media game Epidemic Menace, where the goggles provide an overlay of fictional content on reality. The goggles covered only one eye, and through them one could see how the otherwise invisible viruses were moving around in the environment where one was standing.

Another augmented gaming solution that comes to mind is Sony’s The Eye of Judgment, which I saw at TGS 2006.

(1) Fraunhofer, virus game, me trying on goggles, (2) Poster of the Epidemic Menace game from Fraunhofer, (3) Sony’s The Eye of Judgment (TGS 2006)

There are many other systems, but somehow… well that could be me being ignorant, but somehow they don’t seem to fly out to the world. I see an initial presentation, I get enthusiastic, but then they fade into silence. On the other hand: these are early, brave, expensive projects that are heavy on the tech. They fly by showing what is possible. Paving the way.

Now, the system shown at FDG is called Goblin XNA, for augmented reality research and games. It is open source and builds upon XNA. This, combined with the fact that the goggles are cheap, suddenly makes it very accessible to work with! Perhaps this could be one of the applications that start trotting along the pavement laid out by the sweat, blood and tears of the earlier projects. We will see. For my part, I’m putting Goblin into my “future box” (things I want to play with when I’m done with the dissertation).